What the EU AI Act demands from organizations

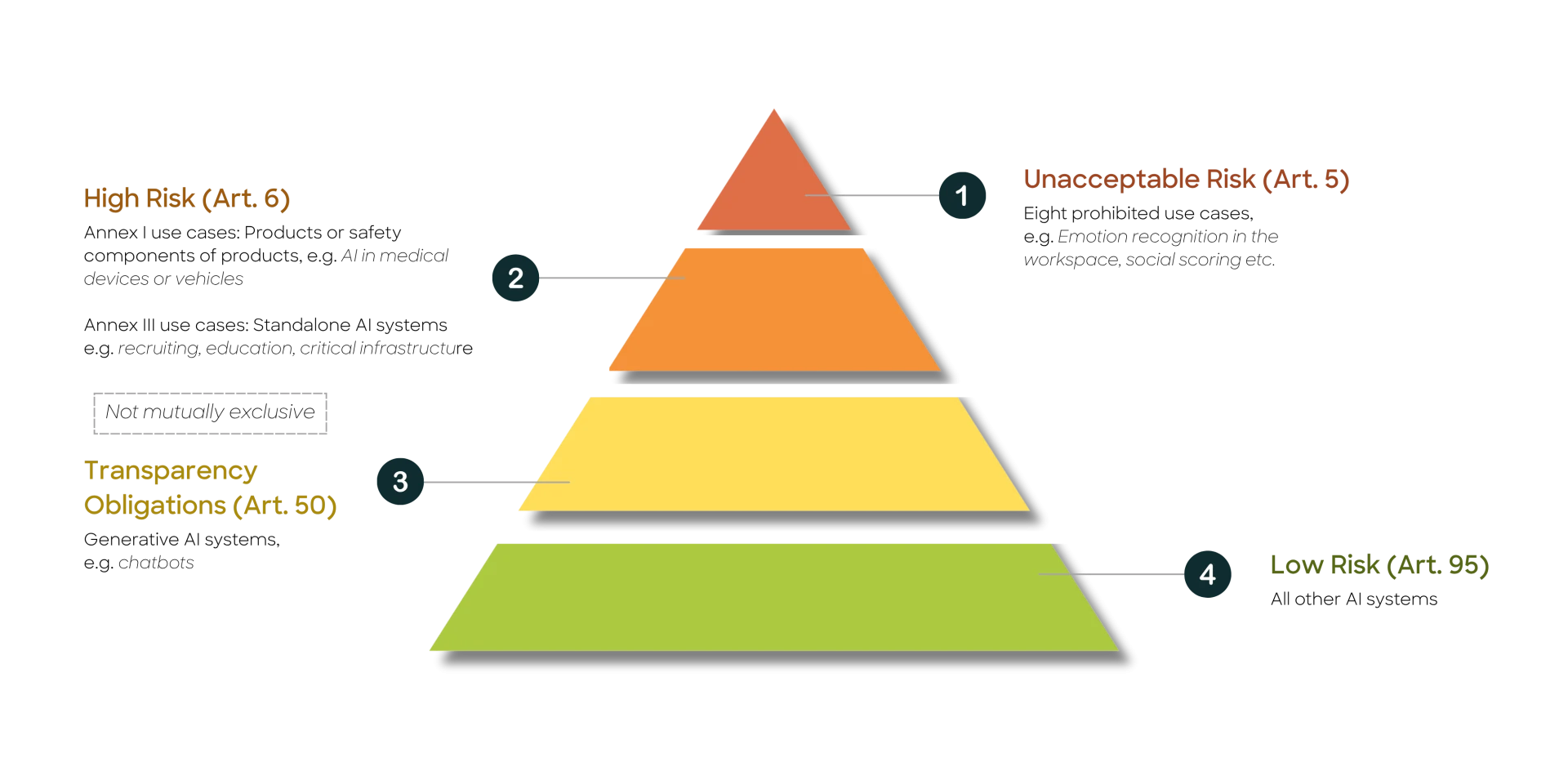

The EU AI Act classifies AI applications by risk level – from low risk to outright prohibited. Strict safety and compliance requirements apply in particular where AI is used in sensitive domains such as healthcare, human resources, or public administration.

The four risk classes of the EU AI Act – from prohibited to low risk.

For high-risk AI systems – such as those used in recruiting, credit scoring, or critical infrastructure – the law specifically requires:

- a continuous risk management system (Art. 9)

- clear data quality and data governance requirements (Art. 10)

- automatic event logging to ensure traceability (Art. 12)

- transparency and instructions for use for deployers (Art. 13)

- effective human oversight mechanisms (Art. 14)

- evidence of accuracy, robustness, and cybersecurity (Art. 15)
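To make two of these requirements more tangible, the following is a minimal Python sketch of automatic event logging (Art. 12) combined with a simple human oversight gate (Art. 14). All names here (`log_event`, `decide_with_oversight`, `review_threshold`, the system ID) are hypothetical and chosen for illustration. The AI Act does not prescribe a log format or a threshold, and a sketch like this is not sufficient for compliance on its own.

```python
import json
import logging
from datetime import datetime, timezone

# Hypothetical logger name; the AI Act does not prescribe a log format.
logger = logging.getLogger("ai_event_log")


def log_event(system_id: str, event: str, details: dict) -> str:
    """Serialize one traceable event as a JSON line and write it to the log."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "system_id": system_id,
        "event": event,
        "details": details,
    }
    line = json.dumps(record, sort_keys=True)
    logger.info(line)
    return line


def decide_with_oversight(system_id: str, score: float,
                          review_threshold: float = 0.8) -> str:
    """Route low-confidence outputs to a human reviewer and log every decision.

    The threshold is an illustrative assumption, not a legal requirement.
    """
    outcome = "auto_accept" if score >= review_threshold else "human_review"
    log_event(system_id, "decision", {"score": score, "outcome": outcome})
    return outcome
```

The design choice worth noting is that the decision and its log entry are produced in the same function, so no output can bypass the record. In practice, the log would also need to go to tamper-resistant storage with a defined retention period.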

Many organizations face the same practical questions: how do we implement Articles 9–15 at the use-case and organizational level? How do we govern the compliant use of generative AI? Which roles and processes are essential for meeting legal and technical requirements? And how do we embed risk management into our operational processes?

Risk classification: Where do organizations actually stand?

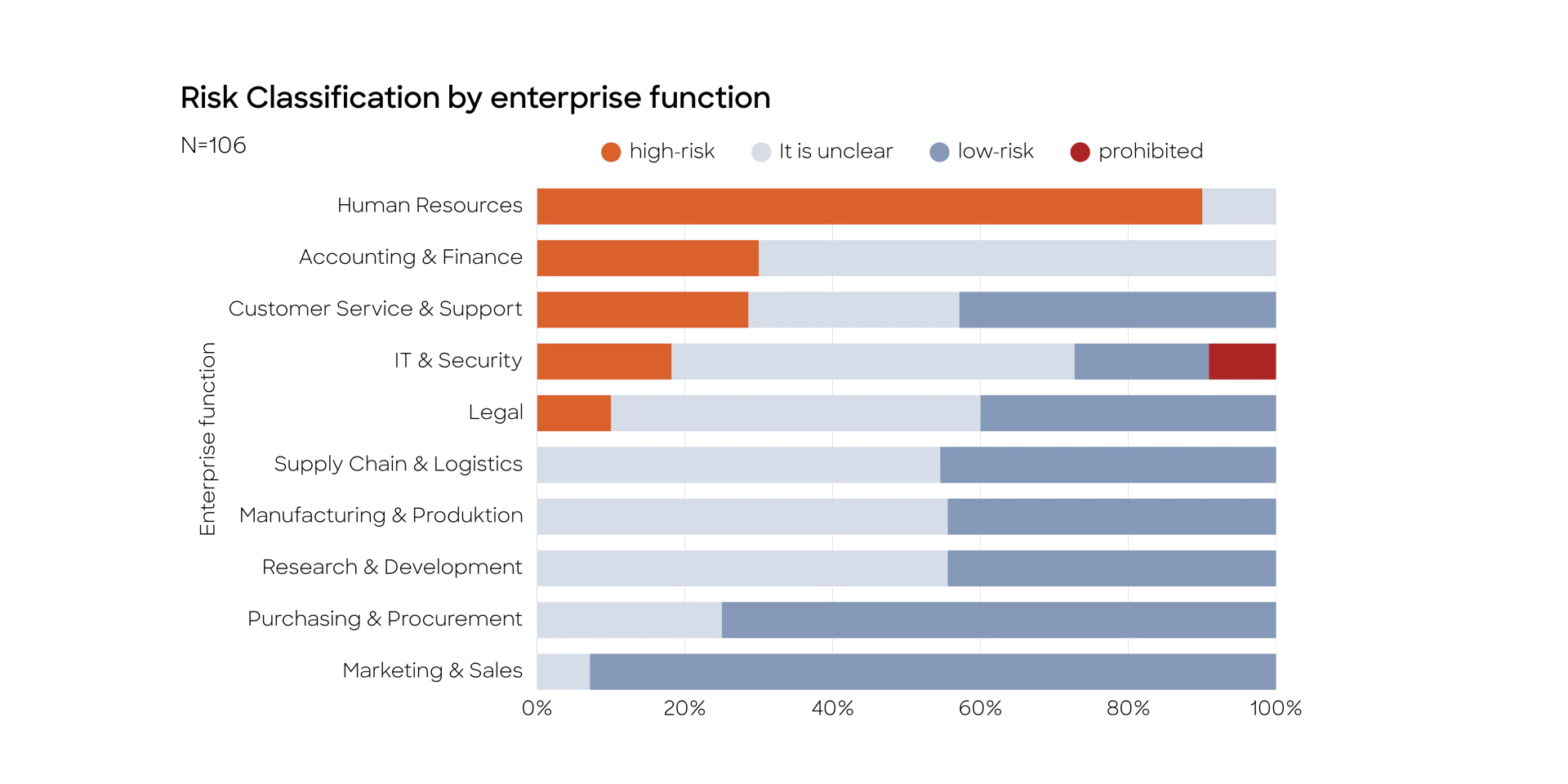

One of the greatest practical challenges is risk classification. appliedAI analyzed more than 100 AI systems across various enterprise functions – with a sobering result:

- 18% of systems could be clearly identified as high-risk

- 42% clearly fell into the low-risk category

- For 40%, the classification remained unclear

Risk classification by enterprise function – HR has the highest share of high-risk systems, Marketing & Sales the lowest.

In the worst case, if every system with an unclear classification turned out to be high-risk, up to 58% of all analyzed systems could be considered high-risk. The areas most affected are HR, Accounting & Finance, Customer Service, and IT & Security.
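The worst-case figure follows directly from the shares listed above: the clearly high-risk systems plus all systems whose classification remained unclear. A short Python sketch of that arithmetic, using only the percentages reported in this article (the variable names are illustrative):

```python
# Shares reported in the analysis above (percent of analyzed systems).
shares = {"high_risk": 18, "low_risk": 42, "unclear": 40}

# The three categories cover all analyzed systems.
assert sum(shares.values()) == 100

# Best case: none of the unclear systems turn out to be high-risk.
best_case_high_risk = shares["high_risk"]
# Worst case: every unclear system turns out to be high-risk.
worst_case_high_risk = shares["high_risk"] + shares["unclear"]

print(f"High-risk share: {best_case_high_risk}% to {worst_case_high_risk}%")
# prints "High-risk share: 18% to 58%"
```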

The root causes of this uncertainty often lie in ambiguous definitions within the law itself: what counts as a "task" under the AI Act? When does a system act "on behalf of a law enforcement authority"? Where exactly is the boundary for critical infrastructure? These open questions hinder investment decisions – and slow down AI adoption across Europe.

What organizations need to know now

The AI Act applies not only to AI providers, but also to organizations that deploy AI systems. Obligations vary depending on risk class and role – as a provider or as a deployer. The key priority is therefore: classify early, clarify responsibilities, and build governance structures before the deadlines take effect.
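One way to start on "classify early, clarify responsibilities" is a simple inventory of AI systems that records role, risk class, and an accountable owner. The sketch below is a hypothetical illustration in Python: the class and field names are assumptions, and the risk labels mirror the categories used in this article rather than the Act's full taxonomy.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List, Optional


class Role(Enum):
    PROVIDER = "provider"
    DEPLOYER = "deployer"


class RiskClass(Enum):
    # Simplified labels for illustration, not the Act's complete taxonomy.
    PROHIBITED = "prohibited"
    HIGH = "high"
    LOW = "low"
    UNCLEAR = "unclear"


@dataclass
class AISystem:
    name: str
    role: Role
    risk_class: RiskClass
    owner: Optional[str] = None  # person or team accountable for the system


def open_items(inventory: List[AISystem]) -> List[str]:
    """List systems that still need a classification or an accountable owner."""
    items = []
    for system in inventory:
        if system.risk_class is RiskClass.UNCLEAR:
            items.append(f"{system.name}: classify risk")
        if system.owner is None:
            items.append(f"{system.name}: assign owner")
    return items


# Hypothetical example entries.
inventory = [
    AISystem("cv-screening", Role.DEPLOYER, RiskClass.HIGH, owner="HR"),
    AISystem("support-chatbot", Role.DEPLOYER, RiskClass.UNCLEAR),
]
```

Calling `open_items(inventory)` on these example entries flags the chatbot twice, once for its missing classification and once for its missing owner, which is the kind of worklist a governance team could track ahead of the deadlines.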

For mid-sized enterprises in particular: the AI Act does not have to be a barrier. It offers an opportunity to systematically improve AI processes, build trust with customers and partners, and scale high-quality AI applications with greater planning certainty.

How organizations can benefit from the AI Act

Implemented correctly, the AI Act offers genuine strategic advantages:

- Strengthen internal AI processes and risk awareness sustainably

- Build trust with customers, partners, and regulatory authorities

- Scale high-quality AI applications with confidence

- Embed ethical technology use as a competitive differentiator

- Lay the foundation for global AI competitiveness

How appliedAI supports implementation

appliedAI supports organizations across three core areas:

AI Act Governance

We help map your AI risk landscape, define responsibilities, and establish scalable governance processes – from executive briefings and the development of an AI Act roadmap to building an internal risk management system that accounts for standards such as ISO 42001.

AI Competence

Article 4 of the AI Act requires providers and deployers to take measures to ensure that their staff have a sufficient level of AI literacy (AI competence). Our AI Competence Training Compact (45 minutes, fulfills Art. 4) and role-specific EU AI Act Learner Paths for technical professionals, AI coordinators, and AI users help meet this requirement efficiently and at scale.

AI Act Engineering

Trustworthy AI must be built to be compliant from the ground up. We support organizations in establishing the technical infrastructure – from data governance and automated logging to human oversight mechanisms and a compliant-by-design approach for AI use cases. From planning to production.

Want to implement the EU AI Act securely in your organization?

We'll show you how – and support you wherever you need us.